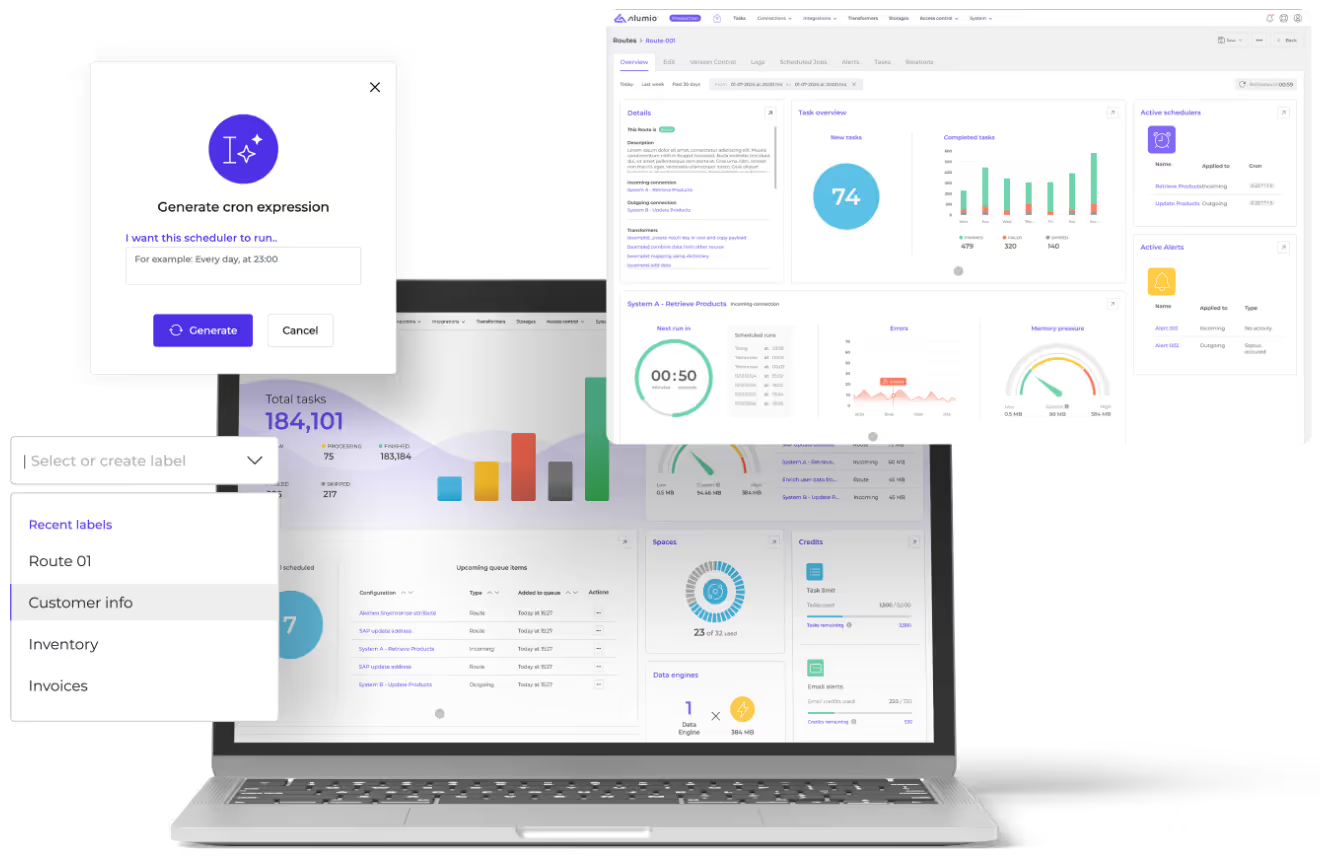

Discover the Alumio architecture & performance!

Designed to maximize automation and flexibility!

Alumio delivers a horizontally and vertically scalable, high-performance, cloud-native infrastructure that acts as a central hub to govern and orchestrate integrated systems, data, and processes. It can process thousands of transactions per second and supports thousands of hosted cloud-native Alumio environments.

The performance benefits of the Alumio iPaaS

Robust storage and queuing system

Data packages are temporarily stored as ‘in-process data’ in our robust queuing system. Depending on the type of transformation and the chosen Alumio package, they are stored in MySQL, Elastic, Apache Spark, Google Cloud Platform, or Amazon Redshift.

These queued packages guarantee processing at scale for all the individual pages of data in transit. If any system goes offline, the architecture above allows flow processing to be paused and resumed gracefully without loss of data.
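The pause-and-resume behaviour can be pictured with a minimal sketch. It is an illustration only, not Alumio's implementation: the `PausableQueue` class and the `target_online` callback are hypothetical names, and an in-memory deque stands in for a durable store.

```python
from collections import deque


class PausableQueue:
    """Hypothetical in-memory stand-in for a durable queue (illustration only)."""

    def __init__(self, items):
        self.pending = deque(items)   # packages still waiting to be delivered
        self.delivered = []           # packages handed to the target system

    def drain(self, target_online):
        """Deliver packages while the target is online.

        Returns True when the queue is empty, or False when processing was
        paused because the target went offline. Undelivered packages stay
        queued, so a later call resumes exactly where this one stopped.
        """
        while self.pending:
            if not target_online():
                return False
            self.delivered.append(self.pending.popleft())
        return True
```

Calling `drain` again once the target system is back continues with the first undelivered package, so nothing is lost or sent twice in this simplified model.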

Big Data

Alumio is built as a high-performance integration platform that connects external applications and handles big data. Data is split into smaller packages called ‘Alumio tasks’, which flow through our system in a scalable manner into externally connected applications via our API, supported by our robust queuing mechanism.
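As a rough illustration of splitting a large data set into smaller packages, the sketch below batches any iterable into fixed-size lists. The function name `split_into_tasks` and the `task_size` parameter are assumptions made for this example; they do not describe how Alumio actually sizes its tasks.

```python
from itertools import islice


def split_into_tasks(records, task_size):
    """Yield consecutive batches ("tasks") of at most task_size records.

    Works lazily, so the full data set never has to fit in memory at once.
    """
    if task_size < 1:
        raise ValueError("task_size must be at least 1")
    iterator = iter(records)
    while True:
        batch = list(islice(iterator, task_size))
        if not batch:
            return
        yield batch
```

Each yielded batch can then be queued and processed independently, which is what makes this kind of design scale.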

Quality control

The Alumio monitoring system can recognize field errors. If additional health workflows are configured, it can automatically remove these fields from API retry requests so that critical integration flows do not fail due to field-level data errors.

Errors that cannot be recovered automatically are displayed on a user-friendly dashboard, where users can troubleshoot them for a limited period by manually modifying and retrying failed records.
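The idea of dropping a rejected field and retrying can be sketched as follows. This is a simplified model under stated assumptions: `FieldError`, `send_with_field_recovery`, and the `send` callback are hypothetical names, and a real target API would report field errors in its own format.

```python
class FieldError(Exception):
    """Hypothetical error raised when the target API rejects a single field."""

    def __init__(self, field):
        super().__init__(f"invalid field: {field}")
        self.field = field


def send_with_field_recovery(record, send, max_attempts=3):
    """Send a record, removing rejected fields between retries.

    Returns the list of fields that were dropped. Raises RuntimeError if the
    record still fails after max_attempts, so it can be handled manually.
    """
    payload = dict(record)  # copy, so the caller's record is left untouched
    dropped = []
    for _ in range(max_attempts):
        try:
            send(payload)
            return dropped
        except FieldError as err:
            if err.field not in payload:
                raise  # nothing left to remove; not recoverable this way
            del payload[err.field]
            dropped.append(err.field)
    raise RuntimeError("record still failing after retries")
```

Records that end in `RuntimeError` correspond to the errors that cannot be recovered automatically and would need manual review.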

Authentication

Alumio can recognize expired or invalid API credentials and automatically take connection resources offline. When a connection goes offline, Alumio's monitoring recognizes the failed tasks. Additional workflows can be created to pause all related integration flows that are in progress. New flows will not be scheduled while the connection is offline, and the offline connection is placed into an automated recovery procedure. Once the connection comes back online, all related integration flows resume processing where they left off, and new flows that did not run are scheduled.
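A minimal sketch of this behaviour is shown below. It is not Alumio's implementation: the `Connection` and `Flow` classes are hypothetical, and treating an HTTP 401 response as the signal for expired or invalid credentials is an assumption made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Connection:
    name: str
    online: bool = True


@dataclass
class Flow:
    name: str
    connection: Connection
    steps: list
    next_step: int = 0                       # position to resume from
    log: list = field(default_factory=list)  # steps completed so far

    def run(self, call):
        """Run remaining steps; pause if the connection is or goes offline."""
        while self.next_step < len(self.steps):
            if not self.connection.online:
                return "paused"
            status = call(self.steps[self.next_step])
            if status == 401:  # assumed signal for invalid credentials
                self.connection.online = False
                return "paused"
            self.log.append(self.steps[self.next_step])
            self.next_step += 1
        return "done"
```

Because `next_step` is only advanced after a successful call, running the flow again after the connection is restored continues with the step that failed.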

Health monitoring

Alumio health monitoring can recognize when integration flows miss their last scheduled run due to a downtime event. It automatically reschedules these flows so that they run immediately after the interruption has been resolved. Alumio is also resilient to intermittent network errors: it recognizes them and retries automatically.
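Automatic retrying of intermittent network errors is commonly done with exponential backoff. The sketch below shows that general technique, assuming the errors surface as Python's built-in `ConnectionError` or `TimeoutError`; the function name, the number of attempts, and the delays are illustrative choices, not Alumio settings.

```python
import time


def retry_transient(operation, attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call operation(), retrying transient network errors with backoff.

    The delay doubles after each failed attempt (0.5s, 1s, 2s, ...). The last
    failure is re-raised so that it can be reported instead of hidden.
    """
    for attempt in range(attempts):
        try:
            return operation()
        except (ConnectionError, TimeoutError):
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
```

The `sleep` parameter is injectable so the waiting behaviour can be replaced in tests without slowing them down.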

Limitations

Alumio has no practical limits within an SMB Alumio private cloud account regarding:

Number of applications that can be connected.

Number of flows that can be defined.

Number of flows that can run in parallel.

Number of records that can be processed.

The size of data that can be processed.

Alumio limitations are based on the number of requests per minute (or second). Our enterprise application scales horizontally and vertically with the given infrastructure.
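A limit expressed in requests per minute is often enforced with a sliding-window counter. The sketch below shows that general technique only; the class name and parameters are hypothetical, and the source does not state how Alumio measures or enforces its limits.

```python
from collections import deque


class RequestsPerMinuteLimiter:
    """Sliding-window limiter: at most `limit` requests per window."""

    def __init__(self, limit, window_seconds=60.0):
        self.limit = limit
        self.window = window_seconds
        self.stamps = deque()  # timestamps of accepted requests, oldest first

    def allow(self, now):
        """Return True and record the request if it fits within the limit."""
        # Forget requests that have fallen out of the window.
        while self.stamps and now - self.stamps[0] >= self.window:
            self.stamps.popleft()
        if len(self.stamps) < self.limit:
            self.stamps.append(now)
            return True
        return False
```

Passing the current time in as `now` (for example from `time.monotonic()`) keeps the limiter deterministic and easy to test.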

DevOps

Alumio has a full DevOps team monitoring the Alumio platform 24/7. The DevOps team has people in multiple locations and each team member is fully equipped to work remotely or from an Alumio office.

Using code standards

The Alumio core team has defined a software development process to ensure that Alumio remains scalable, reliable, and 100% available. The SDLC (Software Development Life Cycle) is the process followed for each Alumio software (component) project. Each project follows a detailed plan describing how to develop, maintain, replace, and alter or enhance specific software. This methodology ensures the quality of the Alumio iPaaS.

The advantages of Alumio OpenAPI

Simple interface

Authentication

API mocking

Lifecycle API management

A Symfony-based iPaaS

The integration benefits of the Alumio architecture

Integrate two or more systems

Extensive integration capabilities

Both synchronous and asynchronous

Alumio iPaaS data entities

Don’t reinvent the wheel

Use each system for its strengths

Implementing a Hexagonal design

Why is the Alumio iPaaS the preferred solution for developers?

Discover why system integrators prefer the Alumio iPaaS

Get a free assessment of your integration needs