Integrating manufacturing data: sync, async, batch, and event-driven

Before choosing a specific integration pattern for manufacturing data, the first question is usually about timing. Some integrations need a direct answer before the next step can happen. Others can send data and continue processing without waiting. That distinction splits manufacturing integrations into two categories of data exchange: synchronous and asynchronous.

Synchronous vs asynchronous: the foundational choice

In practice, manufacturing environments need both synchronous and asynchronous integration. The key is applying the right model to the right workflow:

Synchronous communication requires the requesting system to pause and wait for a direct response from the receiving system before continuing. This gives the requester an immediate, validated answer but creates a dependency: if the receiving system is slow or unavailable, the requesting system is blocked until the response arrives or the request times out.

Asynchronous communication allows the requesting system to send a message and continue its operations without waiting for a response. The receiving system processes the data at its own pace. This prevents blocking and improves throughput, but requires more careful error handling to catch failed or delayed transfers.

Understanding this distinction is the starting point for designing a reliable manufacturing data architecture. The five patterns below each sit within one of these two models.

When to use the five integration patterns in manufacturing operations

1. Request/reply for critical validations

The request/reply pattern is the clearest example of synchronous communication. One system sends a request, waits for a response, and only continues once it has received the information it needs.

This pattern is useful when a process cannot proceed safely without confirmation.

Example use case: Before releasing a work order, the ERP may need to verify material availability. It sends a request to the warehouse or inventory system, waits for the current stock status, and then decides whether production can move forward.

Why it matters: Request/reply helps ensure immediate validation, but it also introduces dependency. If the target system is slow or unavailable, the requesting system is delayed as well.
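
The dependency can be sketched in a few lines of Python. This is a minimal illustration, not a real ERP or WMS API: `check_stock`, the material codes, the quantities, and the timeout values are all hypothetical. The caller blocks on the reply but stops waiting after a timeout, so a slow inventory system results in a held work order instead of a requester that hangs indefinitely.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as ReplyTimeout

# Hypothetical stock levels standing in for a warehouse/inventory system.
STOCK = {"STEEL-COIL": 120, "RESIN-A": 0}


def check_stock(material: str, delay_s: float = 0.0) -> int:
    """Simulated inventory lookup; delay_s mimics a slow target system."""
    time.sleep(delay_s)
    return STOCK.get(material, 0)


def release_work_order(material: str, required_qty: int,
                       timeout_s: float = 0.5, delay_s: float = 0.0) -> str:
    """Wait for the stock reply, but stop waiting after timeout_s seconds."""
    pool = ThreadPoolExecutor(max_workers=1)
    try:
        reply = pool.submit(check_stock, material, delay_s)
        available = reply.result(timeout=timeout_s)  # the caller blocks here
    except ReplyTimeout:
        return "held: no reply from inventory system"
    finally:
        pool.shutdown(wait=False)  # do not wait for a slow lookup to finish
    if available >= required_qty:
        return "released"
    return "held: insufficient stock"


print(release_work_order("STEEL-COIL", 100))
print(release_work_order("RESIN-A", 10))
print(release_work_order("STEEL-COIL", 100, timeout_s=0.05, delay_s=0.3))
```

Holding the work order on a timeout is one possible policy. Whether to hold, retry, or escalate depends on how costly a wrong release would be.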

2. Fire-and-forget for non-blocking machine and sensor data

Fire-and-forget is a common asynchronous pattern in which one system sends data and continues operating without waiting for a response.

This is well suited to high-volume data flows where the sender should not be blocked by network or processing delays.

Example use case: A PLC or IoT sensor can send temperature, vibration, or machine-state data to a central logging or analytics platform without waiting for confirmation that every message has been processed.

Why it matters: This pattern supports throughput and avoids slowing down machinery or edge devices with unnecessary waiting. The tradeoff is that reliability depends more heavily on the middleware or queueing layer handling delivery correctly.
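
As a rough sketch of that tradeoff, the Python example below uses a bounded in-memory `queue.Queue` as a stand-in for the middleware or queueing layer. The names `send_reading` and `drain`, the sensor name, and the buffer size are assumptions made for illustration. The sender never waits: if the buffer is full, the reading is dropped and the sender is told so.

```python
import queue
import threading


def send_reading(buffer: queue.Queue, reading: dict) -> bool:
    """Enqueue and return immediately; never wait for the consumer."""
    try:
        buffer.put_nowait(reading)
        return True
    except queue.Full:
        return False  # the sender is not blocked, but this reading is lost


def drain(buffer: queue.Queue, sink: list) -> None:
    """Hypothetical logging/analytics consumer, working at its own pace."""
    while True:
        item = buffer.get()
        if item is None:  # sentinel: stop consuming
            return
        sink.append(item)


buffer = queue.Queue(maxsize=100)  # stand-in for a broker or middleware queue
stored = []
consumer = threading.Thread(target=drain, args=(buffer, stored))
consumer.start()

accepted = [
    send_reading(buffer, {"sensor": "spindle-temp", "celsius": 60 + i})
    for i in range(5)
]

buffer.put(None)  # shut the consumer down for this demo
consumer.join()
print(accepted, len(stored))
```

Dropping on overflow is only one choice. A durable broker with persistence and redelivery would shift the reliability burden to the queueing layer, as described above.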

3. Batch processing for large-volume operational and financial data

Batch processing groups data over a defined period and transfers it at scheduled intervals instead of sending each record immediately.

This is still highly relevant in manufacturing, especially for processes that do not require live action.

Example use case: A manufacturing execution system may collect labor hours, material consumption, and production yield throughout a shift and then transfer that data to the ERP at the end of the shift or overnight for reconciliation and reporting.

Why it matters: Batch processing reduces constant load on systems and is efficient for large data volumes. The tradeoff is latency: the receiving system does not see those updates until the next scheduled run.
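
A minimal Python sketch of the idea, assuming a hypothetical `ShiftBatch` collector on the MES side. The work order numbers and field names are invented for illustration. Records accumulate during the shift, and a single `flush` call aggregates them into one payload for the ERP.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ShiftBatch:
    """Collects MES records during a shift and hands them over in one transfer."""
    records: List[Tuple[str, float, int]] = field(default_factory=list)

    def record(self, work_order: str, labor_hours: float, units_good: int) -> None:
        self.records.append((work_order, labor_hours, units_good))

    def flush(self) -> Dict[str, Dict[str, float]]:
        """Aggregate per work order, clear the buffer, and return the batch payload."""
        totals: Dict[str, Dict[str, float]] = defaultdict(
            lambda: {"labor_hours": 0.0, "units_good": 0}
        )
        for work_order, hours, units in self.records:
            totals[work_order]["labor_hours"] += hours
            totals[work_order]["units_good"] += units
        self.records.clear()
        return dict(totals)


batch = ShiftBatch()
batch.record("WO-1001", 1.5, 40)
batch.record("WO-1001", 2.0, 55)
batch.record("WO-1002", 3.0, 20)

payload = batch.flush()  # in practice, triggered by a scheduler at shift end
print(payload)
```

Until `flush` runs, the ERP sees none of these records, which is the latency tradeoff in concrete form.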

4. Event-driven architecture for multi-system reactions

Event-driven architecture is an asynchronous model in which one system publishes an event, and multiple subscribing systems react to it.

Instead of hard-wiring systems together directly, the architecture allows multiple downstream processes to respond to the same operational trigger.

Example use case: When a machine fault occurs, that event can be published once and then used to trigger different actions in multiple systems. Maintenance software can create a service ticket, ERP can adjust production planning, and customer-facing systems can flag possible delivery delays.

Why it matters: This pattern is highly scalable and flexible because new subscribers can be added without changing the publishing system. It is particularly useful in manufacturing where one operational event may need to trigger multiple business responses at once.
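
The publish/subscribe mechanics can be sketched with a small in-process event bus in Python. The `EventBus` class, the `machine.fault` event name, and the machine ID are hypothetical. For simplicity, handlers here run one after another inside `publish`, whereas a real deployment would typically deliver events asynchronously through a message broker.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """In-process publish/subscribe; a real deployment would use a broker."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the event to every subscriber; return how many were notified."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


actions: List[str] = []
bus = EventBus()
bus.subscribe("machine.fault",
              lambda e: actions.append(f"maintenance: ticket for {e['machine']}"))
bus.subscribe("machine.fault",
              lambda e: actions.append(f"erp: replan orders on {e['machine']}"))
bus.subscribe("machine.fault",
              lambda e: actions.append(f"customer portal: flag delay risk for {e['machine']}"))

# The publisher emits the fault once and knows nothing about who reacts to it.
notified = bus.publish("machine.fault", {"machine": "PRESS-07", "code": "E42"})
print(notified, actions)
```

Adding a fourth reaction means one more `subscribe` call. The code that publishes the fault does not change.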

5. Real-time API-based synchronization for core system visibility

Real-time API-based synchronization is the pattern most people think of when they talk about connected operations. The goal is to update core systems as close to immediately as practical when operational data changes.

This is especially useful when visibility across systems needs to stay current.

Example use case: When raw materials are received into the warehouse system, that update can be pushed immediately to ERP so procurement, planning, and production teams can act on current availability.

Why it matters: Real-time API-based synchronization improves visibility and responsiveness, but it also places more demands on integration reliability, security, and monitoring. It works best when supported by a platform that can manage those flows consistently.
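
One way to picture the reliability demands is a push with retries and duplicate protection. The Python sketch below is illustrative only: `ErpStub`, `push_with_retry`, the event fields, and the backoff values are assumptions, and no real ERP or WMS API is being modeled. The receiver deduplicates on an event ID so that a retried push cannot count the same goods receipt twice.

```python
import time
from typing import Callable


class ErpStub:
    """Hypothetical ERP endpoint that applies each goods receipt at most once."""

    def __init__(self) -> None:
        self.on_hand: dict = {}
        self._seen_event_ids: set = set()

    def apply_receipt(self, event: dict) -> None:
        if event["event_id"] in self._seen_event_ids:
            return  # duplicate delivery: already applied, ignore
        self._seen_event_ids.add(event["event_id"])
        material = event["material"]
        self.on_hand[material] = self.on_hand.get(material, 0) + event["qty"]


def push_with_retry(send: Callable[[dict], None], event: dict,
                    attempts: int = 3, base_delay_s: float = 0.01) -> bool:
    """Push immediately; on connection errors, retry with exponential backoff."""
    for attempt in range(attempts):
        try:
            send(event)
            return True
        except ConnectionError:
            if attempt < attempts - 1:
                time.sleep(base_delay_s * 2 ** attempt)
    return False  # caller should alert or park the event for later replay


erp = ErpStub()
calls = {"n": 0}


def flaky_send(event: dict) -> None:
    """Simulated network path that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("ERP endpoint unreachable")
    erp.apply_receipt(event)


receipt = {"event_id": "GR-0001", "material": "RESIN-A", "qty": 500}
delivered = push_with_retry(flaky_send, receipt)
print(delivered, erp.on_hand)
```

The retry loop and the deduplication set are the kind of plumbing that has to exist somewhere, whether in custom code or in the integration layer.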

Why no single integration pattern is enough in manufacturing

No single integration pattern addresses every manufacturing workflow. A resilient manufacturing technology stack typically uses all five, with each pattern assigned to the processes it fits best.

Request/reply handles validations where a process genuinely cannot proceed without confirmed data. Fire-and-forget supports high-throughput telemetry where blocking is unacceptable. Batch processing consolidates large datasets efficiently during off-peak windows. Event-driven architecture coordinates multi-system responses to operational events. Real-time API patterns keep inventory, order, and production data synchronized across core platforms.

Managing this combination reliably through custom scripts and point-to-point connections creates technical debt that compounds as the system landscape grows. This is where an integration platform becomes especially valuable.

How an integration platform helps manage all five patterns

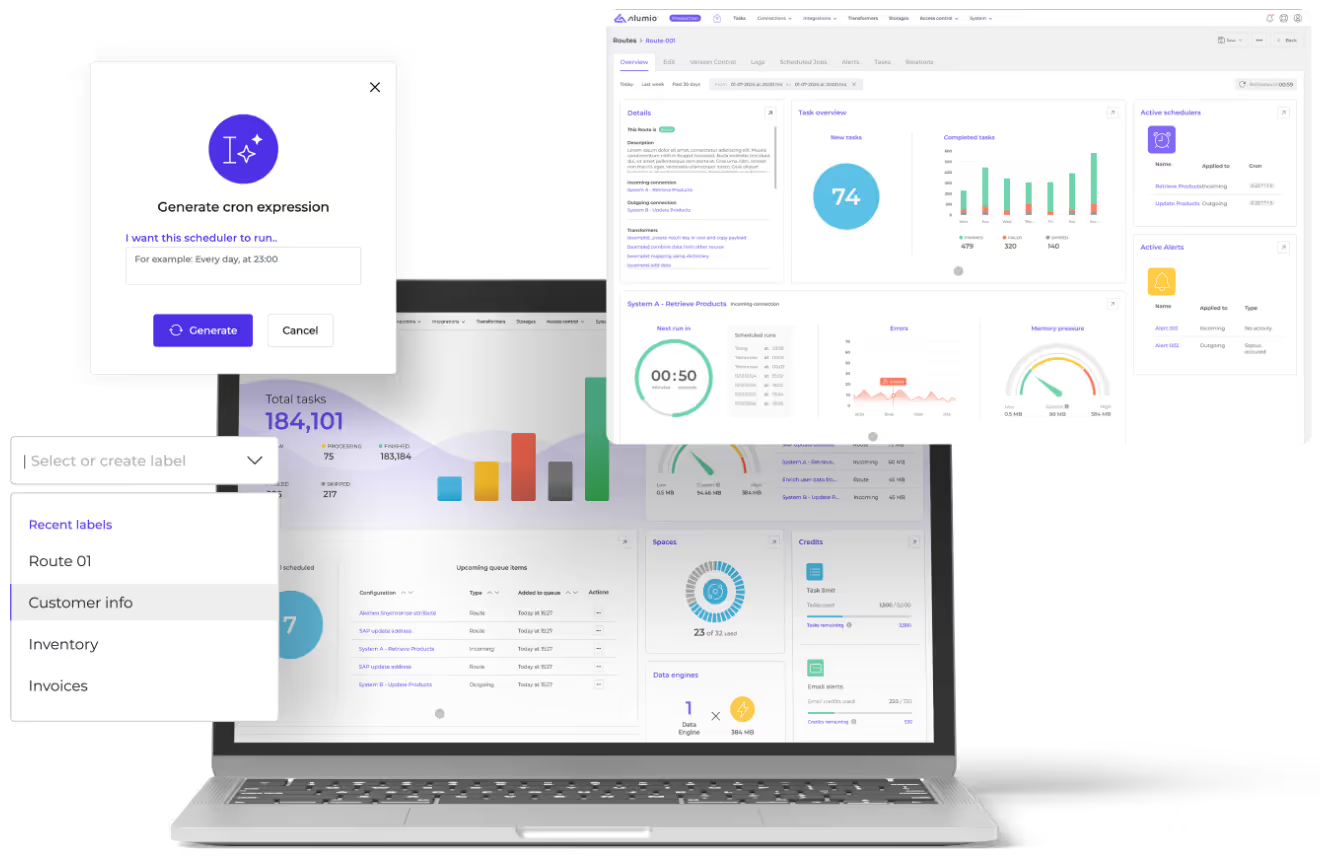

A centralized integration platform-as-a-service (iPaaS) like Alumio provides the infrastructure to configure, monitor, and govern all five patterns from a single interface, with the visibility to identify where failures occur and the flexibility to adapt as systems change.

These platforms are built for exactly this kind of multi-pattern manufacturing environment. They connect ERP, MES, WMS, EAM, and other operational systems through a governed integration layer that supports whichever data flow each workflow requires. Instead of relying on fragile custom code between systems, manufacturers can use the Alumio integration platform to manage synchronous and asynchronous communication, real-time and batch processing, and different routing patterns through one central layer.

That matters because manufacturing integrations rarely stay simple. As more systems are added, the challenge is not just connecting them once. It is being able to monitor, adapt, and govern different data flows over time without turning the architecture into a maintenance burden.

Building a more resilient manufacturing data architecture

Manufacturing operations do not need one universal integration pattern. They need the flexibility to use different patterns where they fit best, whether that means request/reply for critical validation, fire-and-forget for telemetry, batch processing for large-volume back-office data, event-driven architecture for coordinated responses, or real-time API-based synchronization for live operational visibility.

What matters is not forcing every workflow into the same model, but having one central integration platform to manage them all reliably. That is where Alumio helps. By providing a governed integration layer, Alumio enables manufacturers to orchestrate different integration patterns across the same landscape without relying on disconnected custom scripts or hard-to-maintain point-to-point logic. The result is a more adaptable and resilient data architecture built for operational continuity.